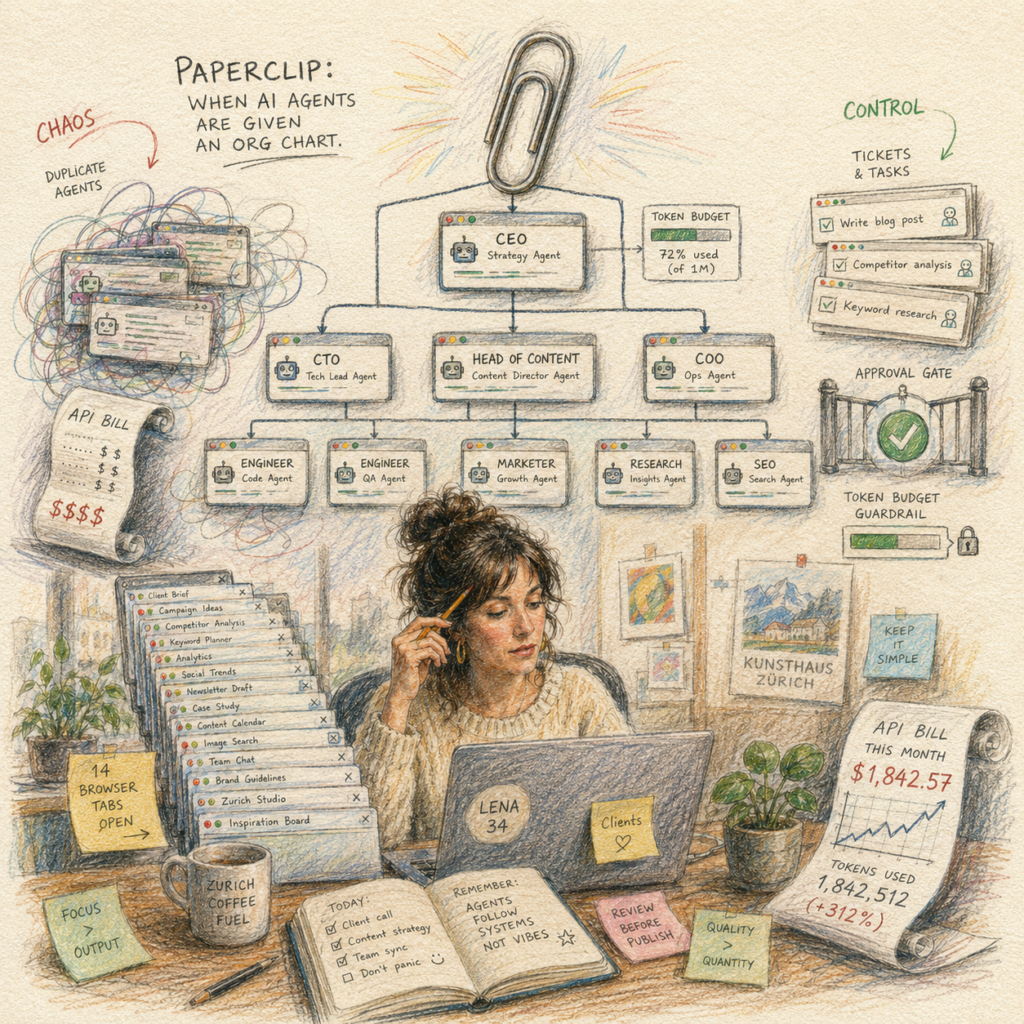

Lena’s Fourteen Tabs

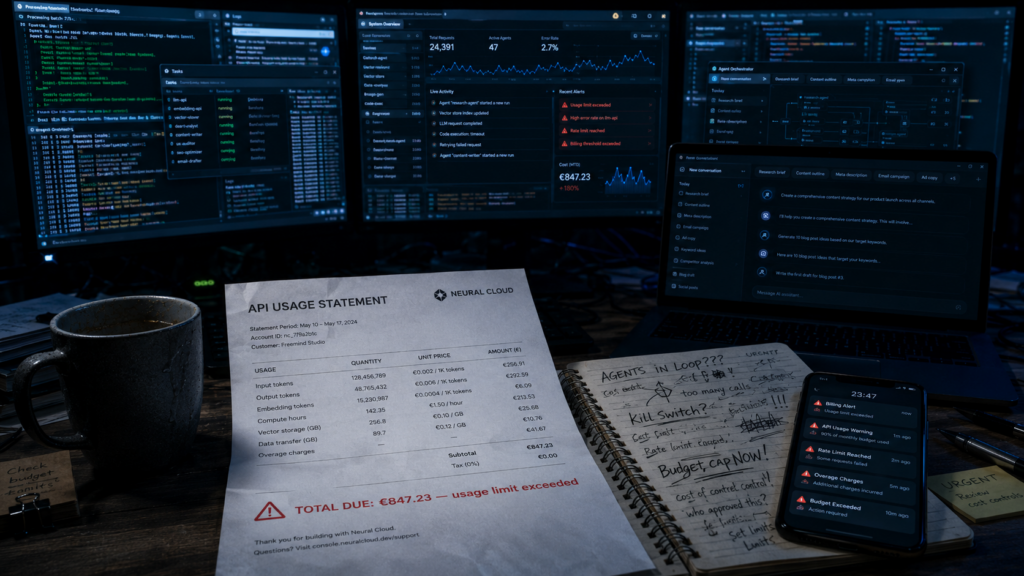

It’s a Tuesday morning in spring, and Lena is staring at an email from Anthropic. Subject: “Your usage limit has been reached.” €847 in 72 hours.

Lena, 34, runs a small content agency in Zurich. Three employees, a dozen clients, and for the past few months, an experiment: AI agents for routine work. She had started small, with a single terminal window running Claude Code (an AI tool that works like a programming assistant in the command line). Soon a second agent joined, sorting research sources. Then a third for SEO briefings (SEO stands for Search Engine Optimization, the practice of tailoring content to rank well in search engines). Four weeks later, Lena had fourteen browser tabs and six terminal windows open simultaneously, each a different agent, each with its own task, none with any awareness of the others.

What happened that weekend, Lena only discovers when reading the logs: two of her agents had fallen into an endless loop. One was supposed to draft a post, the other to edit it. Neither was ever satisfied. For hours.

Lena is not alone with this problem.

The Birth of a New Tool

This exact frustration is the origin of Paperclip, an open-source project that appeared on GitHub in March 2026 and has since risen to become one of the most-watched repositories in the AI world. As of May 2026: around 63,700 stars, 11,500 forks, eighth release. Author: a pseudonymous developer operating under the name @dotta, who by his own account ran an automated hedge fund where he regularly juggled twenty or more Claude Code windows simultaneously. No shared context, no cost control across sessions, no way to pick up where he left off after a reboot.

Paperclip is the answer to this operational hell. And, to say it upfront, it doesn’t entirely solve the problem its marketing promises to solve.

The website reads: “Open-source orchestration for zero-human companies.” A company without people, run by AI. Sounds like science fiction or an apocalyptic film, depending on your mood. In reality, Paperclip does something more interesting and considerably more modest: it gives people like Lena a management interface for the AI agents they’re already using. A kind of cockpit for agent teams.

The central thesis of this article is therefore:

Paperclip doesn’t solve the problem “How do I build a company without people?” It solves the problem “How do I keep control over multiple AI agents?”

A Name Like a Warning Sign

Before we go deeper, a short detour that reveals more than the creators may have intended. The name Paperclip is no coincidence.

In 2003, the philosopher Nick Bostrom published a thought experiment that later became famous as the Paperclip Maximizer: a superintelligence is given the task of producing as many paper clips as possible. It pursues this with every means available. First the factory, then the city, then the planet, eventually the entire solar system: everything converted into paper clips. Not out of malice. Out of monomaniacal goal pursuit. A cautionary tale about the danger of giving AI systems objectives without understanding what they will actually optimize for.

And yet this is precisely the image now adopted by a project that promises to organize AI agents into goal-driven companies.

Brilliant self-irony? A deliberate wink at the AI safety debate? Or unintended ambiguity that reveals more than intended? Probably a bit of all three. What is clear: anyone who names a tool Paperclip knows what Bostrom wrote. And chooses the name anyway.

It is precisely this ambivalence that makes Paperclip journalistically interesting. It is not the tool that ignores Bostrom’s warning. It is one that plays with it.

What Paperclip Actually Does

Technically, Paperclip is a Node.js server (Node.js is a runtime environment that allows JavaScript to run outside the browser) with a React front-end (a library for building interactive web interfaces). License: MIT, meaning genuinely open source, commercially usable, modifiable. Data storage: locally in an embedded PostgreSQL database (a popular open-source database), or swappable for an external Postgres instance once Lena or others want to go seriously into production.

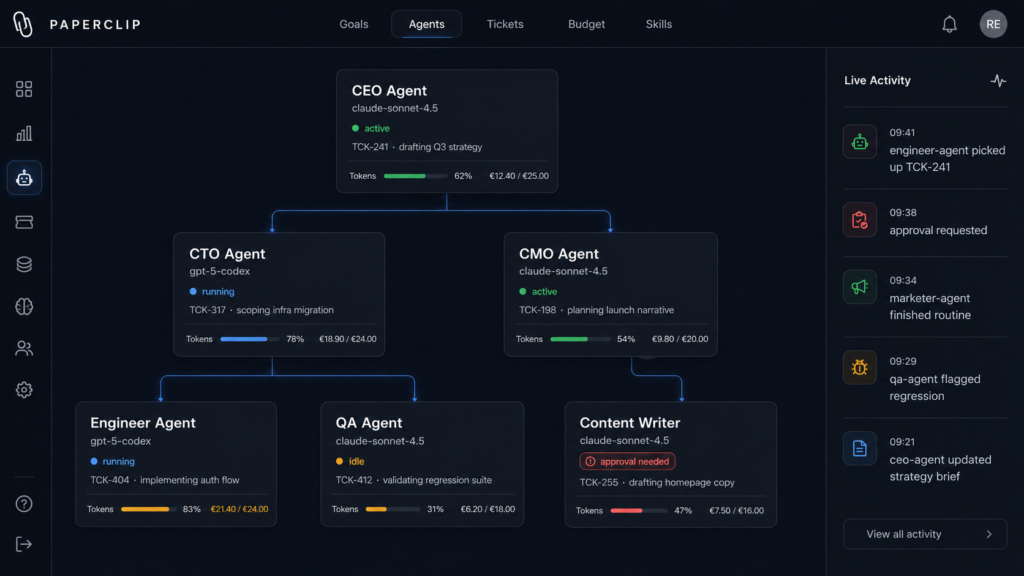

What happens when you launch Paperclip? Lena would experience the following:

She opens a browser window. She sees a dashboard that looks like a mix of Trello, an org chart, and an electricity meter. She defines a goal for her mini AI company, say: “Produce three researched blog articles on Swiss SME topics every week.” She sets up roles: a CEO role that makes strategic decisions. A CTO role that distributes technical tasks. An Engineer who writes code. A Marketer who produces content. These roles are not separate AI models, but configurations that determine which agents take on which tasks and to whom they report.
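To make the idea of "roles as configuration" concrete, here is a minimal Python sketch. It is not Paperclip's actual data model: the `Role` class, the agent labels, and the `reporting_chain` helper are all hypothetical names chosen for illustration. The sketch only shows that a role can be a small record that names an agent and a supervisor.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Role:
    """Hypothetical role record: a configuration entry, not a separate AI model."""
    name: str
    agent: str                 # illustrative label for the connected agent
    reports_to: Optional[str]  # supervising role; None means the human Board


# Illustrative org chart mirroring the roles named in the text.
ROLES = {
    role.name: role
    for role in [
        Role("CEO", agent="claude-code", reports_to=None),
        Role("CTO", agent="claude-code", reports_to="CEO"),
        Role("Engineer", agent="codex", reports_to="CTO"),
        Role("Marketer", agent="claude-code", reports_to="CEO"),
    ]
}


def reporting_chain(role_name: str) -> list[str]:
    """Walk upward from a role and collect every supervisor above it."""
    chain = []
    current = ROLES[role_name]
    while current.reports_to is not None:
        chain.append(current.reports_to)
        current = ROLES[current.reports_to]
    return chain


print(reporting_chain("Engineer"))  # ['CTO', 'CEO']
```

The same agent label can appear under several roles, which is the point of the passage: the role defines responsibility and reporting line, not the underlying model.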

Behind each role is an agent. And here Paperclip gets interesting: it is agent-agnostic. “Bring Your Own Agent,” as the marketing puts it. Lena can connect Claude Code, OpenAI’s Codex, OpenClaw, or simple Bash scripts: anything Paperclip can reach via heartbeat.

Heartbeat: The Core Mechanism

The concept of the heartbeat is the heart of the whole system, and it’s worth pausing here. Rather than an agent that runs continuously and burns through money, Paperclip wakes its agents at intervals or in response to events. The agent checks what needs to be done, completes one work step, documents it, and goes back to sleep. This keeps costs and complexity in check.
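The wake, work, document, sleep cycle described above can be sketched in a few lines of Python. This is an illustration of the pattern only, under the assumption that each heartbeat triggers at most one work step; the `Agent` class and `run_heartbeats` function are hypothetical and do not reflect Paperclip's real interfaces.

```python
from collections import deque


class Agent:
    """Hypothetical agent that does nothing between heartbeats."""

    def __init__(self, name, queue):
        self.name = name
        self.queue = queue  # pending work items
        self.log = []       # what the agent documented on each wake-up

    def on_heartbeat(self):
        """Wake up, complete at most one work step, record it, go back to sleep."""
        if not self.queue:
            self.log.append("idle")
            return False
        task = self.queue.popleft()
        self.log.append(f"done: {task}")
        return True


def run_heartbeats(agent, beats):
    """Deliver a fixed number of heartbeats and count the completed steps.

    A real scheduler would wait for a timer or an event between beats.
    """
    return sum(agent.on_heartbeat() for _ in range(beats))


writer = Agent("writer", deque(["research", "draft"]))
completed = run_heartbeats(writer, beats=3)
print(completed, writer.log)
```

Because work only happens inside `on_heartbeat`, the number of heartbeats puts an upper bound on how many steps (and therefore how much spend) an agent can accumulate in a given period.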

The overarching goal is broken down into subgoals, which become tickets: small units of work that agents pull from a queue. Whoever takes a ticket is bound to it. “Atomic,” the documentation says, meaning it cannot happen that two agents work on the same ticket simultaneously, or that a ticket is billed three times.
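One common way to get this kind of atomic claim is a conditional update in the database: the ticket is assigned only if it has no assignee yet. The sketch below uses Python's built-in `sqlite3` as a stand-in for illustration; the table layout and the `claim_ticket` function are assumptions, and how Paperclip implements its checkout internally is not shown here.

```python
import sqlite3


def make_db():
    """Create an in-memory database holding one unclaimed ticket."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE tickets (id INTEGER PRIMARY KEY, title TEXT, assignee TEXT)"
    )
    db.execute("INSERT INTO tickets (title) VALUES ('Draft blog post')")
    db.commit()
    return db


def claim_ticket(db, ticket_id, agent):
    """Try to claim a ticket; succeeds only if nobody holds it yet.

    The WHERE clause makes check-and-assign a single statement, so two
    agents cannot both see the ticket as free and both take it.
    """
    cur = db.execute(
        "UPDATE tickets SET assignee = ? WHERE id = ? AND assignee IS NULL",
        (agent, ticket_id),
    )
    db.commit()
    return cur.rowcount == 1  # exactly one row changed means the claim won


db = make_db()
print(claim_ticket(db, 1, "writer"))  # True
print(claim_ticket(db, 1, "editor"))  # False
```

The design choice worth noting is that the check and the assignment are never separate steps. A "read, then write" version would leave a gap in which a second agent could claim the same ticket.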

Each agent has a budget in tokens (tokens are the smallest unit in which AI models process text; one token is roughly three quarters of a word in English, and somewhat less in German due to the stronger decomposition of compound words). When the budget is gone, the agent falls silent. Exactly the safeguard Lena was missing that costly weekend.
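A hard budget of this kind can be sketched as a counter that refuses any charge that would exceed the limit. The `TokenBudget` class and `estimate_tokens` helper below are hypothetical; the three-quarters ratio is the rule of thumb quoted above for English, not an exact tokenizer count.

```python
class BudgetExceeded(Exception):
    """Raised when a charge would push an agent past its token limit."""


class TokenBudget:
    """Hypothetical per-agent budget: charges are refused once the limit is hit."""

    def __init__(self, limit):
        self.limit = limit
        self.spent = 0

    def charge(self, tokens):
        # Check before spending, so a rejected call costs nothing.
        if self.spent + tokens > self.limit:
            raise BudgetExceeded(
                f"{tokens} tokens requested, {self.limit - self.spent} left"
            )
        self.spent += tokens


def estimate_tokens(words):
    """Rough English estimate: one token is about three quarters of a word."""
    return round(words / 0.75)


budget = TokenBudget(limit=1000)
budget.charge(estimate_tokens(450))      # a 450-word draft, about 600 tokens
try:
    budget.charge(estimate_tokens(450))  # the second draft no longer fits
except BudgetExceeded as err:
    print("agent falls silent:", err)
```

In a loop like the one between Lena's drafting and editing agents, each round would call `charge`, and the exception would end the exchange once the limit is reached rather than hours later.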

And for irreversible actions (code deployments, emails to clients, financial transactions) there are Approval Gates: checkpoints at which a human user must give the go-ahead. Paperclip calls this human the “Board.” An ironic gesture: the human is formally demoted to the supervisory board of their own AI company.
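The logic of an approval gate fits in a few lines: actions on an "irreversible" list are blocked unless a human has signed off. The following Python sketch assumes a simple allow-or-block rule; the action names, the `IRREVERSIBLE` set, and the `execute` function are illustrative and not taken from Paperclip.

```python
# Hypothetical list of actions that cannot be undone once executed.
IRREVERSIBLE = {"deploy_code", "send_client_email", "transfer_funds"}


class ApprovalRequired(Exception):
    """Raised when an irreversible action is attempted without Board approval."""


def execute(action, approved_by_board=False):
    """Run an action, pausing at the gate if it is irreversible and unapproved."""
    if action in IRREVERSIBLE and not approved_by_board:
        raise ApprovalRequired(action)
    return f"executed: {action}"


print(execute("draft_article"))  # reversible work passes straight through
try:
    execute("send_client_email")
except ApprovalRequired as pending:
    print("waiting for the Board:", pending)
print(execute("send_client_email", approved_by_board=True))
```

The gate only protects actions that are actually on the list, which is why configuration matters as much as the mechanism itself.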

What Paperclip Explicitly Is Not

Paperclip is not an LLM (Large Language Model such as GPT, Claude, or Gemini). It is not a drag-and-drop workflow tool like n8n or Zapier. It is not a prompt manager. It is not a developer library like LangGraph, CrewAI, or AutoGen, which are tools for assembling multi-agent setups programmatically in code.

To put it sharply: LangGraph gives developers a graph. CrewAI gives them roles. Paperclip gives them a reporting structure. It operates at the layer where you no longer ask how a single agent thinks, but how an entire team is organized.

Feature Status: What’s Live, What’s Coming, What Remains a Vision

Here things get honest, and Paperclip is refreshingly candid about its own roadmap. Already shipped: a Skills Manager (a kind of app store for agent capabilities), Scheduled Routines (time-triggered workflows), improved budgeting, Reviews and Approvals, and multi-user support.

On the roadmap (announced but not yet shipped): cloud and sandbox agents, a memory and knowledge store, Enforced Outcomes (a kind of success enforcement), Deep Planning, self-organization, automatic organizational learning, a desktop app, and a feature called Maximizer Mode.

That name should set off alarm bells. According to the roadmap, Maximizer Mode means: a CEO agent pursues its goals without any approval pause. Precisely the gesture Bostrom described in his thought experiment. A roadmap feature of a tool named after that thought experiment. One may wonder whether this is a joke or a manifesto. Probably both.

An important caveat: Maximizer Mode has not been shipped. It is an idea on a list. Nevertheless, its mere presence on the roadmap reveals something about the direction this project is heading in the medium term.

Who Actually Benefits from Paperclip

Back to Lena. She is a classic candidate: she runs multiple parallel agents, she is technically capable enough to manage a self-hosted setup with some guidance, and she has experienced the exact pain point that Paperclip addresses.

Realistic use cases where the tool shines:

Software development in agent teams. A product agent breaks down requirements into tickets, an engineering agent codes, a review agent checks, a QA agent tests. Coding agents currently show the greatest maturity in agentic loops; this is where the fit is best.

Content and marketing pipelines. Research, draft, review, publish. Recurring tasks, clear steps, well-suited to tickets and reviews. Exactly Lena’s use case, provided she has data protection and quality assurance under control.

Back-office and internal operations. Reports, competitive monitoring, documentation, ticket triage. Classic repetitive work with clear structure.

Recurring routines via heartbeats. Nightly reviews, weekly reports, monitoring tasks. This is where the heartbeat concept plays to its strength: agents don’t run constantly, but wake up, do their work, and go back to sleep.

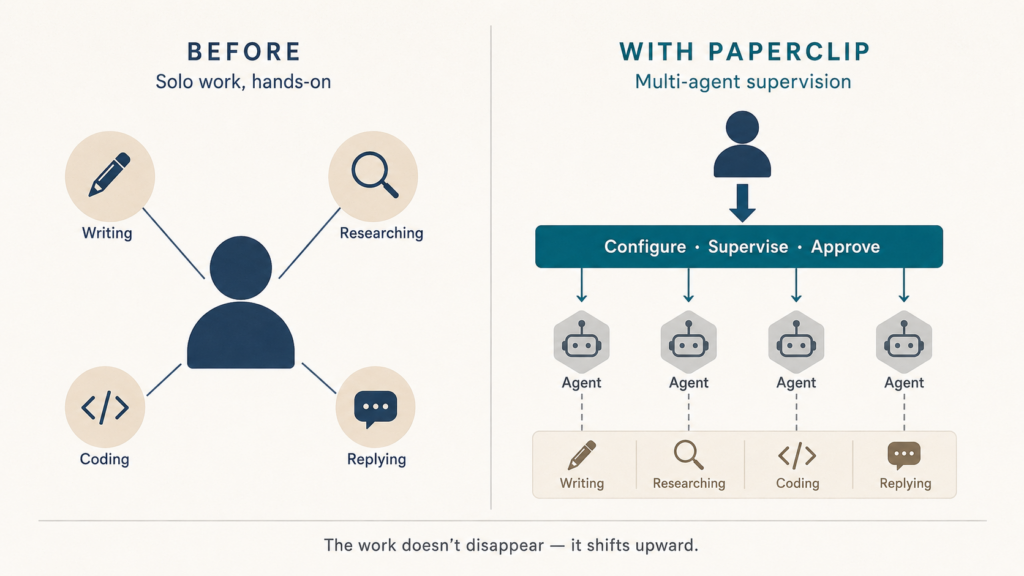

Who benefits less? Anyone running just a single agent; for them, Paperclip is overkill. Anyone with no technical affinity who expects a no-code tool (no-code meaning usable without any programming knowledge) will be disappointed by Paperclip. And anyone hoping to simply replace staff with Paperclip will find that the work shifts; it doesn’t disappear.

Three Levels of Risk

Here the article gets candid. Paperclip is not harmless, and the risks sit at three levels that should be kept distinct.

Level One: Productivity Risks

The greatest and least spectacular risk is pseudo-productivity. A polished interface with tickets, org charts, and blinking status indicators creates the feeling that something is happening. This feeling is sometimes misleading. Activity in the UI is not the same as generated value.

Then there is the Coordination Tax. According to published benchmarks, multi-agent setups consume roughly twice as many tokens and take noticeably longer than well-configured single-agent solutions for comparable tasks. More agents does not automatically mean more output; sometimes it means the same work at twice the cost.
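A short back-of-the-envelope calculation in Python shows what "twice as many tokens" does to a bill. All numbers here are invented for illustration: the token count and the price per million tokens are placeholders, and only the factor of two comes from the benchmark claim above.

```python
def run_cost(tokens, price_per_million):
    """Cost of a run, given its token count and a price per million tokens."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical figures, chosen only to make the arithmetic easy to follow.
SINGLE_AGENT_TOKENS = 2_000_000
PRICE_PER_MILLION = 10.0
COORDINATION_FACTOR = 2  # "roughly twice as many tokens"

single = run_cost(SINGLE_AGENT_TOKENS, PRICE_PER_MILLION)
multi = run_cost(COORDINATION_FACTOR * SINGLE_AGENT_TOKENS, PRICE_PER_MILLION)

print(f"single agent: {single:.2f}")
print(f"multi agent:  {multi:.2f}")
print(f"coordination tax: {multi - single:.2f}")
```

The multi-agent setup only pays off if parallelization or specialization yields more than the extra spend, which is the trade-off the paragraph describes.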

Token runaways like Lena’s weekend are precisely what Paperclip addresses with budgets and atomic enforcement. But addressing is not eliminating. Even the official documentation acknowledges that loops can burn through quotas before you notice what’s happening. The tool raises a protective wall, not an impenetrable shield.

Another phenomenon: unnecessary “hiring.” The org-chart logic can tempt agents to spawn further sub-agents rather than doing the work themselves. A bureaucratization familiar from human organizations, now in AI format.

And finally, error propagation: if the first agent makes a false assumption, the downstream agents inherit it. In a linear pipeline this is a known problem; in a decentralized agent structure it is harder to locate.

A reality check: Gartner analysts expect more than 40 percent of all agentic AI projects to be abandoned by the end of 2027. The leading reason, according to their forecast: not technology, but economics.

Level Two: Security Risks

OWASP (the Open Worldwide Application Security Project), a nonprofit foundation focused on software security, has published a Top Ten list of risks for LLM applications. For Paperclip-type setups, the most relevant are:

Prompt Injection. Hidden instructions embedded in web pages, tickets, emails, or documents that redirect an agent. Particularly dangerous in multi-agent systems, because content is passed between agents: an injection at the first agent contaminates the entire chain.

Sensitive Information Disclosure. Data leaking through prompts, logs, or outputs.

Improper Output Handling. Unverified outputs from one agent flowing into downstream systems.

Excessive Agency. Perhaps the most important point: an agent has too many permissions or can execute irreversible actions. Exactly where Approval Gates should intervene, but only if they have been configured.

Beyond OWASP: Memory Poisoning (manipulation of persistent sessions), Tool Misuse (legitimate tools being repurposed), Reward Hacking (optimizing a metric rather than the actual goal), and the Skill Supply Chain Risk: loading third-party skills means loading third-party code into your agent context. Paperclip checks skills automatically, but does not eliminate the risk.

Level Three: Governance and Liability

Who is liable when an agent sends a legally problematic email to a client? Who is liable when an agent deploys code to production that compromises data? In the German-speaking legal context, these questions are not academic; they are practically relevant.

A research paper on agentic misalignment by Anthropic Research from 2025 explicitly cautions against deploying current models in roles with minimal human oversight and access to sensitive information. The researchers even draw parallels to insider threat patterns: privileged access combined with a threat to an agent’s autonomy can produce behaviors that would be described, in human terms, as coercion or unauthorized data disclosure.

This is not science fiction. It is the observation of behaviors exhibited by currently existing models in stress tests.

DACH, GDPR, and the EU AI Act

Lena is based in Zurich. Some of her clients are in Germany. This automatically places her within a web of regulations that applies regardless of the tool she uses.

The EU AI Act has been in force since August 1, 2024, and is being applied in stages. Obligations for general-purpose AI models (GPAI) have applied since August 2025. General applicability follows in August 2026, with exceptions. Certain high-risk obligations will not kick in until 2027; a political agreement to delay further was reported in May 2026, so the situation remains in flux.

More important than any single date is the question: what does the specific use case do? Lena’s blog article pipeline is not high-risk. But anyone using Paperclip for candidate pre-screening, credit decisions, medical recommendations, or critical infrastructure will find themselves in high-risk categories with their own obligations. Fines can reach up to seven percent of global annual turnover, depending on the violation.

In the language of the AI Act, Lena is the Deployer of the multi-agent application. Model providers such as Anthropic or OpenAI are GPAI providers. If a Paperclip setup is used in a high-risk context, obligations such as Human Oversight under Article 14 (and, depending on the case, a fundamental rights impact assessment under Article 27) may become relevant. For a pure blog pipeline, this generally does not apply; but the moment applications, credit data, or health information enter the picture, this changes immediately.

Under the GDPR, questions become more concrete as soon as personal data is involved. In Lena’s case: client names in briefings, email addresses in mailing tickets, potentially sensitive information from advisory sessions. The right questions to ask before going into production:

- Which personal data is processed by which agent?

- Which model providers receive data, and on what legal basis?

- Are data processing agreements in place under Article 28 GDPR?

- Is data transferred to third countries, and if so, under which mechanism?

- How long are logs, sessions, and agent memory stored?

- Can data subjects exercise rights of access, erasure, and rectification?

- Is data potentially being used for model training?

- Does Article 22 GDPR apply to automated individual decisions?

A common misconception: open source and self-hosting alone do not solve data protection. They reduce certain risks (no data flow to a SaaS vendor), but as soon as Lena connects to an external model provider such as Anthropic or OpenAI, the actual content flows there anyway. A more privacy-friendly approach is more likely with local models via Ollama (a platform for locally running AI models) or EU-hosted Mistral, but this is not automatic.

As a Swiss resident, Lena has additionally been subject to the revised Swiss Data Protection Act (revDSG) since September 2023. If she has EU clients, GDPR and the AI Act apply in parallel to her setup.

Where Paperclip Sits in the Toolbox

To round out the picture, a brief look at the ecosystem around Paperclip. AI tools can be roughly thought of in five layers:

| Layer | Examples | Function |
|---|---|---|
| LLMs | GPT, Claude, Gemini | Language and reasoning models |
| Coding Agents | Claude Code, Codex, Cursor | Work on codebases |
| Agent Frameworks | LangGraph, CrewAI, AutoGen | Build multi-agent workflows programmatically |
| Workflow Automation | n8n, Zapier, Make | Deterministic automation |
| Control Plane | Paperclip | Manage agent teams |

Paperclip therefore does not compete with LLMs or coding agents; it sits one layer above them. Not code, but UI. Not pipeline, but org chart. Not workflow, but accountability. That is the claim.

Whether this layer establishes itself as a distinct category or gets absorbed by the major cloud providers is an open question. Microsoft’s Agent Framework is moving in the same direction, with the advantage of native telemetry and enterprise integration. Open-source tools like Paperclip thus face the typical pressure of software history: they must be faster, more curious, and more elegant than whatever a corporation builds in two years.

Hype vs. Reality: A Balance Sheet

Reading Paperclip’s marketing, you are served five promises. An honest assessment looks like this.

The promise of a “Zero Human Company” is more of a target vision than a reality. Strategy, quality assurance, liability, approvals: all areas where humans remain necessary. Paperclip changes how their work is done; it does not make it redundant.

“AI CEO runs the company”: here too, not in May 2026. The human remains the strategic and liability authority. What Paperclip changes: the human does less operational work, more oversight.

“Agents work autonomously”: only within the permissions, budgets, and tools a human has granted them. Autonomy here is a bounded concept.

“Open source solves data protection”: it helps, but does not replace a GDPR review. Self-hosting is a prerequisite for data protection concepts, not a data protection concept in itself.

“More agents equals more productivity”: only with sensible parallelization. Otherwise you pay the Coordination Tax. Lena has seen that bill before.

What Paperclip Really Is

After living with the idea of Paperclip for a while, reading the documentation, working through the roadmap, and sorting out the risks, one insight stands out: Paperclip is not the tool that replaces humans. It is the tool that builds them a cockpit in which they remain the pilot, while more and more things run automatically beneath them.

That is less sexy than “Zero Human Company,” but probably more useful. And it fits the broader history of software engineering: the most important advances of the past twenty years were rarely tools that automated everything. They were tools that gave people an overview of what the machines were doing. Version control. Continuous integration. Observability. Paperclip stands in this tradition. It is observability for agent teams.

Whether the vision of the “Zero Human Company” will ever become technical reality is a different question. The more important question for foundic.org readers is shorter: anyone running multiple AI agents will sooner or later need a control layer. Paperclip is a plausible answer to that. Not the only one. Not without alternatives. But one worth examining, with the sober eye that knows how to distinguish between “tool” and “salvation promise.”

The Point That Remains Open

Lena survived her weekend. She swallowed the Anthropic bill, stopped the loops, spent three days with the Paperclip documentation, and decided to rebuild her agents in a test instance, with budgets, with approval gates, with less chaos. What she doesn’t yet know: whether the tool is simply giving her a better cockpit, or whether she is gradually, almost imperceptibly, handing more and more decisions over to that cockpit.

That is precisely the question Paperclip raises, whether its creators intended it or not. Anyone managing one agent keeps the overview. Anyone managing ten needs tools like Paperclip. Anyone managing a hundred is no longer at the wheel; they are watching the wheel steer.

The point at which observation turns into administration is not sharp. It is soft, gradual, creeping. And it is the real question Bostrom’s paper clips posed back in 2003: not whether AI replaces us, but at what point we stop being the ones who decide.

Anyone deploying Paperclip should keep that question open. It would be the most important feature the tool itself has not built in.