The Moment We Stopped Believing in Images

It was a Friday in March 2023, and the internet had a new favorite image: Pope Francis, nestled in a white Balenciaga down jacket, expression serious, hands buried in pockets, like a streetwear model on the way to Milan Fashion Week. The image was so good that even savvy social media users paused. The jacket’s folds cast realistic shadows, the light fell softly on the fabric, the proportions were right. Almost.

Weeks later: Donald Trump, allegedly under arrest, surrounded by police in dark blue uniforms. Dramatic lighting, cinematic composition, an image like something from a thriller. Except: it never happened. Both images came from Midjourney v5, and both marked a turning point. Not because the technology was new — AI image generators had existed for months. But because for the first time, millions of people simultaneously realized: we can no longer trust photographs.

The question was no longer whether AI images could deceive. The question was: why had we believed them in the first place? And how had things gotten this far?

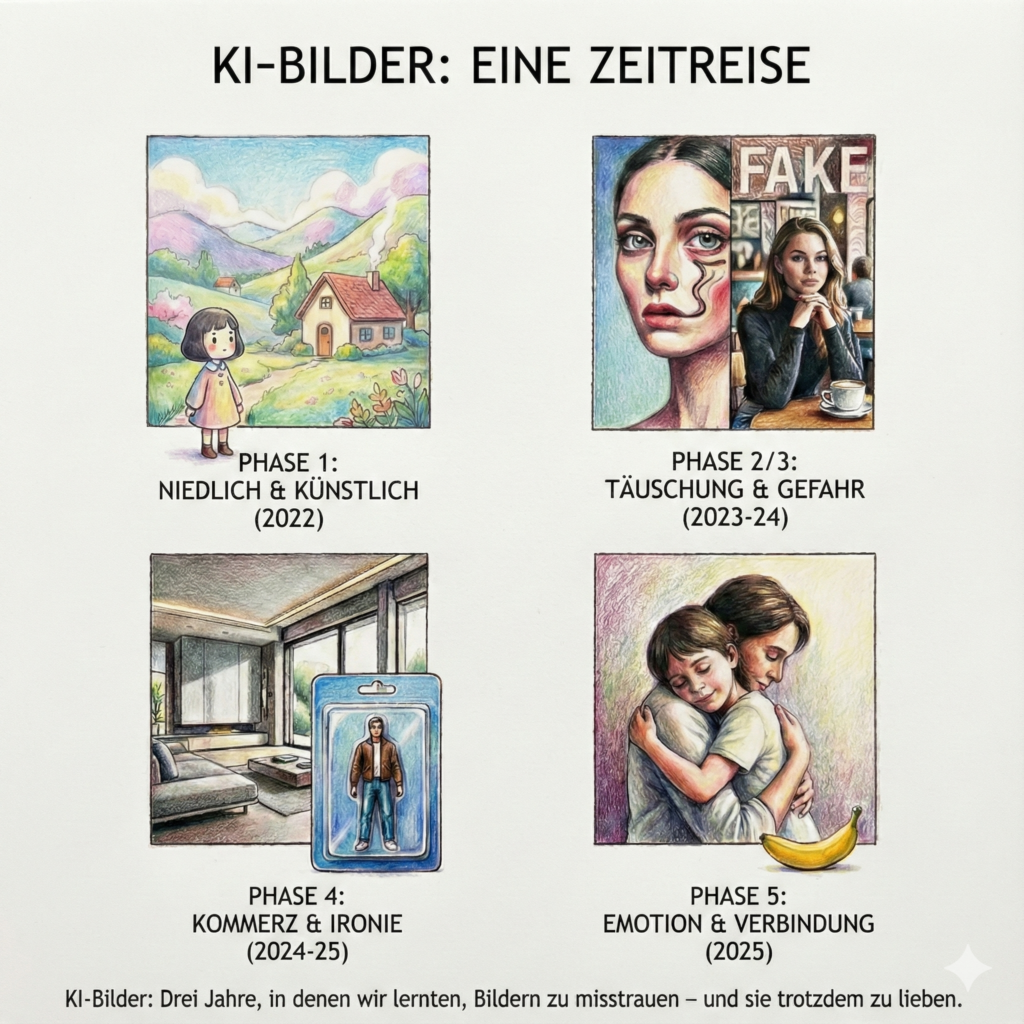

Phase 1: When AI Images Were Still Cute

Looking back to late 2022. AI-generated images back then were above all one thing: obviously artificial. And that was exactly their charm. Midjourney v3 and v4 produced images that didn’t pretend to be real. They looked like concept art from a Studio Ghibli film: pastel skies over gentle hills, houses with rounded windows, a warmth reminiscent of analog children’s films.

The aesthetic was unmistakable: soft contours, exaggerated color palettes, a dreamlike quality immediately recognizable as a digital art form. This visual language became the hallmark of early AI image generation — and the community loved it precisely for that.

But it wasn’t just the Japanese anime aesthetic shaping the algorithms. In parallel, AI models imitated the Wes Anderson style — symmetrical compositions, pastel-colored walls, figures centered in the frame, as if someone had run the aesthetic of “The Grand Budapest Hotel” through a digital filter.

These images were shared en masse, not because they deceived, but because they comforted. In a world that increasingly felt digital, fast, and cold, they offered a “comfy vibe” — handmade-looking nostalgia from the machine. A visual contradiction that worked precisely because it made no claim to reality.

In this phase, AI was a creative toy, not a tool for disinformation. The recognizable artificiality was not just accepted — it was desired. Nobody confused these images with photographs, and that was exactly what made them harmless. But this phase of visual innocence would not last long.

Phase 2: Art Is Allowed to Lie

In parallel, a second current emerged: deliberately surreal, posthuman aesthetics. AI artists experimented with distorted bodies, impossible architectures, organic forms that looked like dreams after a heavy night out. Limbs merged, faces repeated symmetrically, eyes stared from improbable angles.

These images moved deliberately in the space of abstract art. They didn’t want to document — they wanted to unsettle, fascinate, provoke thought. The NFT scene celebrated them, galleries exhibited them. Nobody was outraged, because nobody was deceived. Artificiality here was not a bug but the feature.

What this phase taught: we accept artificiality as long as it identifies itself. The problem begins when the line blurs.

Phase 3: The Dangerous Game With Perception

2024 brought a new quality of deception — and interestingly, it was less spectacular than expected. While ControlNet and similar technologies enabled optical illusions — landscapes in which hidden words or logos only became visible on close inspection — something subtler was happening: AI began generating hyper-realistic everyday photos.

No popes in designer jackets. No arrested presidents. Instead: a woman in a café looking at her phone. A man at a bus stop. A child throwing a ball. Perfect light, natural blur, believable compositions. The problem? These people never existed. And that made them more dangerous than any celebrity fake.

With an image of Donald Trump, we can research, check news sources, find the truth. With an anonymous everyday photo, there is no reference. We cannot verify it. It shows nothing spectacular, so we have no reason to doubt. And that is exactly where the deception takes hold.

2024 showed: AI deceives most effectively where we never even think to be suspicious.

Phase 4: Commercialization and Ironic Acceptance

By late 2024 and early 2025, the tone had shifted again. AI images became everyday. Advertising campaigns used them as a matter of course; architecture and interior design accounts generated hyper-realistic renderings of non-existent spaces — light falling through floor-to-ceiling windows, shadows of plants on concrete walls, materials so perfect-looking you wanted to touch them.

Erstellt mit DALL·E (OpenAI) (Quelle: Wikimedia Commons / Public Domain)

The realism was there, but the sensation was gone. AI was no longer a threat — it was infrastructure. A tool like Photoshop, only faster.

And then came the blister packaging trend: people staged as action figures in transparent plastic packaging, on colored cardboard boxes, with shiny reflections and studio lighting. The execution was technically flawless — perfect plastic, realistic folds, precise shadows. But the content was clearly ironic. Nobody believed people were actually sold this way. It was a playful commentary on consumer culture, self-marketing, the commodification of identity.

These images showed: AI can not only deceive but also reflect. It had grown up — and with it, the audience. We had learned to deal with this technology, to question it, but also to appreciate its aesthetic value.

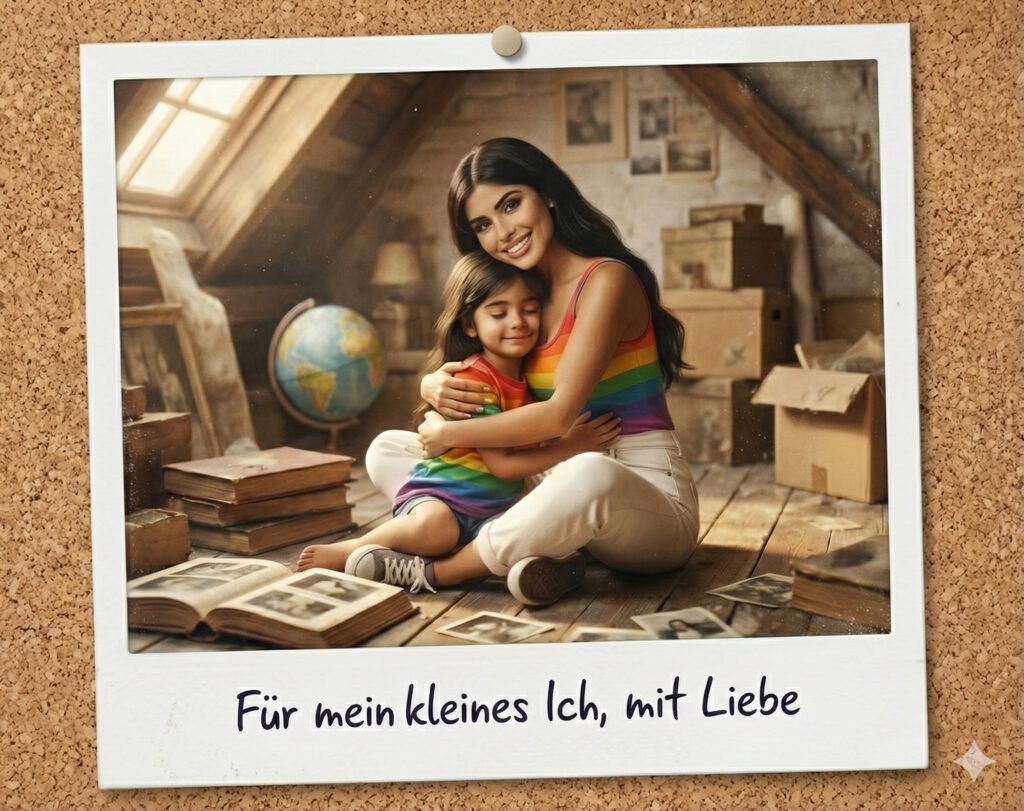

Phase 5: When AI No Longer Deceives, But Moves

Autumn 2025. A new trend conquered social networks: “Hug Your Younger Self.” People uploaded childhood photos and had AI generate images showing their present self embracing their younger self. The execution was remarkable — the AI understood perspectives, lighting conditions, even the emotional body language of an embrace. The images felt intimate, tender, sometimes almost painfully personal.

At the same time, a new trend was taking over social networks: 3D figures that looked like real collectibles. Google’s Gemini model suddenly made it possible to transform ordinary photos into hyper-realistic miniature figures — selfies became action figures, pets became desk toys in anime style, everyday objects became photo-realistic collectibles on virtual desks. The haptic rendering was so convincing — plastic sheen, material transitions, tiny shadows — that you instinctively wanted to reach out and touch them.

What happened here was a qualitative leap, not merely a technical one. AI images no longer just documented an invented reality. They generated emotions. They became personal. They helped people reflect on identity, transience, and memory.

From fake machine, AI had become a relationship technology.

The Real Question

Three years. From Studio Ghibli landscapes to images that make us cry. From obviously artificial to indistinguishably real. From toy to infrastructure. The speed of this development is breathtaking — and a little unsettling.

We have learned to look more critically. We check sources, question contexts, develop a new form of visual literacy. But at the same time, our relationship to images has fundamentally changed. A photograph is no longer proof. A memory can be faked. An emotion can be artificially generated — and still feel real.

The central question is no longer: “Is this image real?” The central question is: “Why do we believe it — and what does that say about us?”

Because in the end it’s not about pixels and algorithms. It’s about what we want to see, what we want to believe, what we want to feel. AI images have not only shown us how powerful technology can be. They have held up a mirror to us — and we are still figuring out what we see in it.

The next three years will show whether we have learned to deal with this. Or whether we continue to navigate a world where we no longer know which images we can still trust — and which ones we want to trust.

The Tools of Change: AI Image Generators in 2026

Three years is an eternity in AI development. What began in 2023 with Midjourney v5 has evolved by 2026 into a diverse ecosystem. The central question is no longer whether AI images are good enough — but which tool works best for which purpose.

The landscape has differentiated. Some models excel at text-in-image, others at anatomical precision, others at emotional staging. And the best part: most are now freely accessible — at least in basic versions.

| Modell | Stärken | Bedienung | Kostenloser Zugang / Preis | Link |

|---|---|---|---|---|

| Nano Banana Pro(Google Gemini) | Material-Physik & 3D-Realismus; versteht Kontext am besten | Sehr einfach (Chat) | Ja (täglich begrenzt) / ca. 20€/Monat | gemini.google.com |

| Ideogram 3.0 | Marktführer für Text-im-Bild; ideal für Verpackungs-Designs | Einfach | Ja (10–25 Bilder/Tag) / ab ca. 7€/Monat | ideogram.ai |

| Leonardo.ai | Stilvielfalt (Anime, Film, Spielzeug); viele Regler | Mittel | Ja (150 Credits/Tag) / ab ca. 10€/Monat | leonardo.ai |

| ChatGPT (Image 1.5) | Beste Konversationsfähigkeit; iteratives Arbeiten | Sehr einfach (Chat) | Ja / 20€ (Plus-Abo) | chatgpt.com |

| Flux 2 (Black Forest) | Höchste anatomische Präzision; Open-Weight | Mittel bis Schwer | Ja (über Drittanbieter) | blackforestlabs.ai |

| Midjourney V7/V8 | Ästhetische Referenz; “schönste” Bilder | Schwer (Discord/Web) | Nein (ab ca. 10€/Monat) | midjourney.com |

Nano Banana Pro — Google’s unofficial community name for Gemini — is the intuitive all-rounder. You chat, describe, iterate. The material physics impresses: plastic sheen, fabric folds, light refraction feel tangibly real.

Ideogram 3.0 masters what others botch: legible typography within the image. Unbeatable for blister packaging or product mockups.

Leonardo.ai is the experimental playground with countless style presets — overwhelming for beginners, perfect for the curious.

ChatGPT shines not through peak quality, but through dialogue. Iterate, adjust, develop — all in conversation.

Flux 2 offers maximum control and precision — but you need technical understanding and third-party platforms.

Midjourney produces the most aesthetically convincing images. Composition, color harmony, emotional impact — everything just works here. The price: no more free access.

The choice depends less on rankings than on the question: what do I want to achieve? Experimenting? Nano Banana or Leonardo. Text designs? Ideogram. Highest aesthetics? Midjourney. Commercial safety? Firefly. Maximum control? Flux.

In 2026, AI image generation is infrastructure. You don’t need to master all the tools — just know which one you need when.

Try It Yourself: Three Prompts That Show What AI Can Do

Theory is good. Practice is better. The best way to understand AI image generation is not to read articles about it — but to try it yourself. So here are three prompts that demonstrate different strengths of current models. Pick a generator from the list above (Nano Banana Pro or ChatGPT are good entry points), copy a prompt, and see what happens.

Prompt 1: The Action Figure Blister Shot

What it’s about: This prompt tests how well AI renders materials like plastic and cardboard, and whether it can replicate the commercial product design of packaging.

Prompt: A high-quality collector action figure in an unopened blister package (Mint in Box). The figure shows a cyberpunk knight with glowing neon accents. The packaging has a clear, glossy plastic front with realistic light reflections. The back is printed cardboard with modern graphic design and the text “NEON GUARDIAN – Limited Edition.” Studio lighting, macro shot, focus on the texture of the plastic and the details of the figure behind the window, 8K resolution, product design photography.

What to observe: How realistic does the plastic surface look? Are the light reflections believable? And especially interesting with Ideogram: is the text legible?

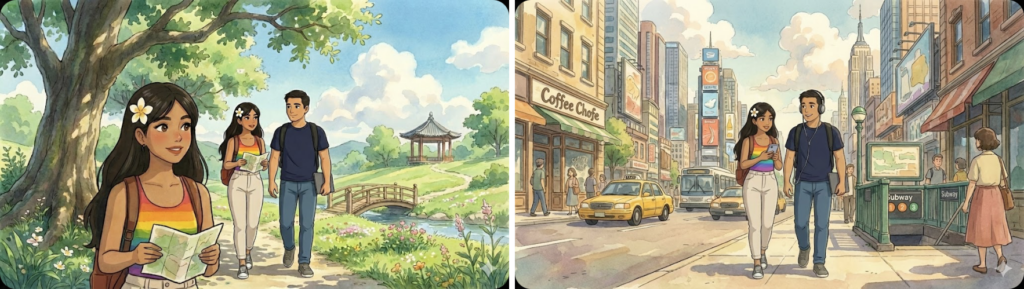

Prompt 2: The Anime Style

What it’s about: Modern AI has learned not just to be photorealistic, but also to imitate stylistic conventions. This prompt targets the aesthetic of high-quality anime productions.

Prompt: Professional anime key visual of a girl with flaming red hair in a futuristic uniform. Style: modern high-end anime (Ufotable/Makoto Shinkai style). Strong colors, dynamic pose, dramatic rim light. The background is a detailed neon-lit Tokyo at night with soft bokeh. Clean line art, digital painting, 2D aesthetic with cinematic lighting mood.

What to observe: Does the AI maintain the 2D look, or does it slip into 3D rendering? How well is the balance between detail and stylized simplification?

Prompt 3: Hyper-Realistic Miniature Figures

What it’s about: This prompt is a deception test. It challenges the AI not just to show a figure, but to depict it as if someone photographed a real, hand-painted miniature.

Prompt: Extreme close-up (macro photography) of a hyper-realistic miniature figure of an old dwarf blacksmith. You can see the finest details: the texture of hand-painted acrylic, tiny scratches on the armor, and the materiality of resin. The figure stands on a crafted base with realistic moss and stones. Very shallow depth of field (blurred background), focus on the figure’s face. Natural side lighting, looks like a real photo in a hobby magazine.

What to observe: Does the result look like a photo or like CGI? How convincing is the background blur? And would you think the image was real if you saw it without context?

One final tip: when experimenting with the prompts, vary individual elements. Replace “cyberpunk knight” with “Victorian detective.” Swap “Tokyo” for “Venice.” Change “dwarf” to “elf warrior.” Even more exciting: upload a selfie and have the AI stage you as an action figure in blister packaging, draw you as an anime character, or depict you as a hand-painted miniature figure (works with most models — Nano Banana Pro and Midjourney do it particularly well). AI responds to nuances, and that is exactly what makes playing with it so fascinating.

In the end you’ll understand what this article means: AI images are not magic. They are a tool. And as with any tool, it’s not the technology that determines the outcome — but what we intend to do with it.

This article was created with the assistance of Claude.ai Sonnet 4.5. The AI images are from Wikimedia Commons or were prompted by me in Nano Banana Pro.

Have you tested the prompts? What was your result — let me know in the comments.