What’s It About?

Companies running large AI models often struggle with inefficient GPU utilization. An architectural approach called Disaggregated Inference targets this problem: by splitting inference workloads across two specialized GPU pools, it can significantly improve resource utilization. In one real-world example, a large retailer using a 70-billion-parameter model for product search saved between $600,000 and $800,000 annually with this approach.

Background & Context

The inefficiency of conventional GPU infrastructure for AI inference stems from the bimodal nature of the workload. Prompt processing (often called prefill), in which the model reads the entire request, is compute-intensive and drives the GPU to high load. The subsequent token generation (often called decode) needs far less compute per step but is bound by memory bandwidth, because the model weights have to be read again for every generation step. With monolithic serving, where the same GPUs handle both phases, this leads to significant underutilization: the compute units sit largely idle during the generation phase, even though the GPU remains occupied. Analyses of GPU utilization show this discrepancy: load peaks during the prompt phase, while actual compute activity drops sharply during the generation phase. This explains why high GPU hours do not automatically mean efficient resource utilization.
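
The gap between the two phases can be illustrated with a rough back-of-the-envelope estimate. The sketch below assumes the common approximation of about 2 floating-point operations per model parameter per token, weights stored in 16 bits, and weights read from memory once per forward pass; it ignores the key-value cache and activations. The hardware figures (1e15 FLOP/s peak compute, 2e12 bytes/s memory bandwidth) are hypothetical placeholders, not the specification of any particular GPU, and the function names are invented for this illustration.

```python
def arithmetic_intensity(tokens_per_pass: int, bytes_per_param: float = 2.0) -> float:
    """FLOPs performed per byte of weights read in one forward pass.

    Assumes ~2 FLOPs per parameter per token and one read of all weights
    per pass, so the parameter count cancels out of the ratio.
    """
    return 2.0 * tokens_per_pass / bytes_per_param


def compute_utilization_bound(intensity: float, peak_flops: float,
                              mem_bandwidth: float) -> float:
    """Upper bound on the fraction of peak compute that can be used.

    Below the ridge point (peak_flops / mem_bandwidth) the pass is limited
    by memory bandwidth, so compute utilization scales with intensity.
    """
    ridge_point = peak_flops / mem_bandwidth
    return min(1.0, intensity / ridge_point)


if __name__ == "__main__":
    PEAK_FLOPS = 1e15      # hypothetical accelerator, FLOP/s
    MEM_BANDWIDTH = 2e12   # hypothetical accelerator, bytes/s

    # Prompt phase: all 2048 prompt tokens go through the model in one pass.
    prefill = arithmetic_intensity(tokens_per_pass=2048)
    # Generation phase: one new token per pass for a single request.
    decode = arithmetic_intensity(tokens_per_pass=1)

    for name, intensity in (("prompt phase", prefill), ("generation phase", decode)):
        bound = compute_utilization_bound(intensity, PEAK_FLOPS, MEM_BANDWIDTH)
        print(f"{name}: {intensity:.0f} FLOPs/byte, compute utilization <= {bound:.1%}")
```

Under these assumptions the prompt phase can saturate the compute units, while a single-request generation step can use only a small fraction of them. Batching many requests into each generation step raises that fraction, which is one reason a dedicated generation pool can be tuned differently from a prompt-processing pool.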

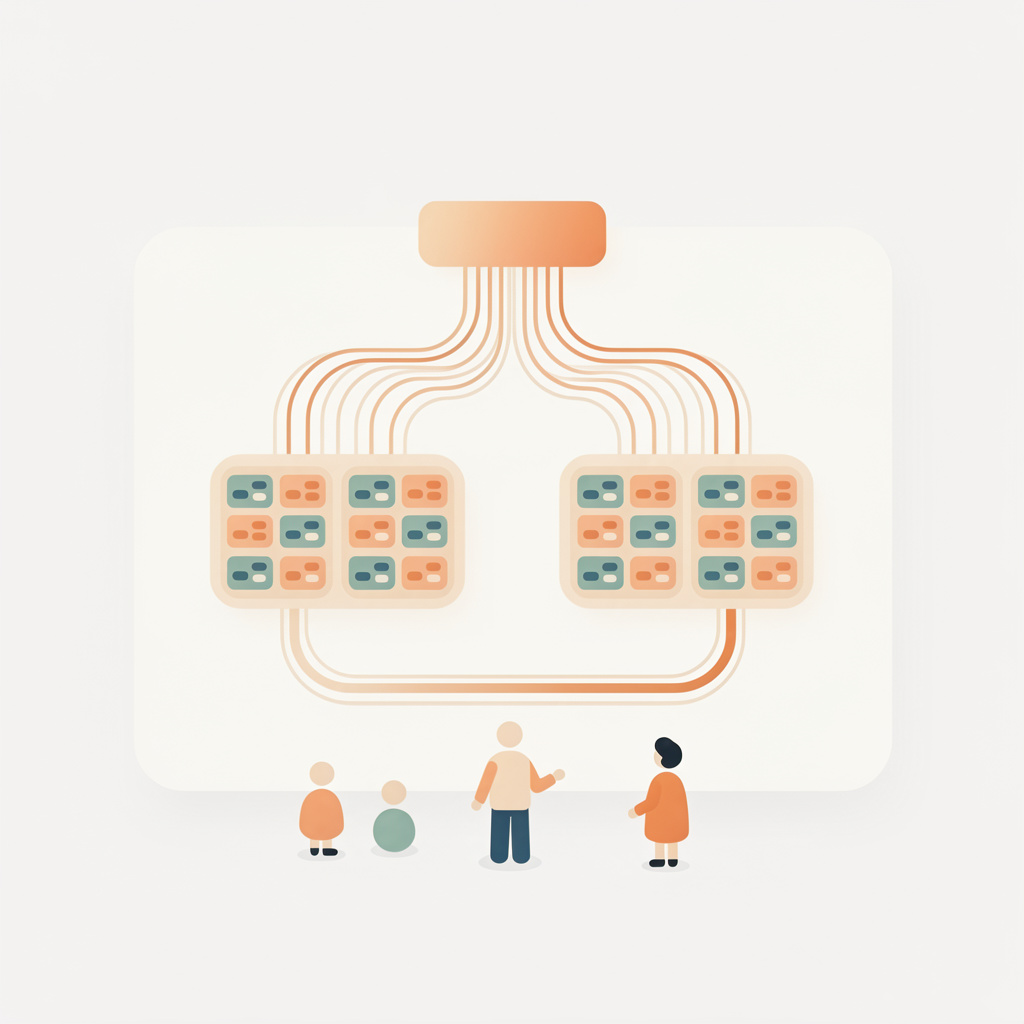

Disaggregated Inference addresses this problem by separating the workload: one pool handles the bursty prompt processing, a second handles continuous token generation. The technical implementation uses a routing layer that analyzes incoming requests and forwards each phase of the work to the appropriate pool. Orchestration frameworks such as NVIDIA’s Dynamo support this architecture. The approach fits a broader trend: as AI applications become more widespread, infrastructure efficiency is moving into focus, as evidenced by NVIDIA’s recent Blackwell generation, which explicitly targets efficiency improvements.
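
To make the two-pool idea concrete, here is a minimal sketch of a routing layer. It is not the API of NVIDIA's Dynamo or any other framework: the class names, the round-robin policy, and the `kv_handle` string are hypothetical stand-ins. It assumes that each request first goes to a worker in the prompt-processing pool and that the resulting intermediate state is then handed to a worker in the generation pool.

```python
from dataclasses import dataclass, field
from itertools import cycle


@dataclass
class Request:
    request_id: str
    prompt_tokens: int
    max_new_tokens: int


@dataclass
class Worker:
    name: str
    handled: list = field(default_factory=list)  # request ids seen by this worker


class DisaggregatedRouter:
    """Hypothetical router: round-robin over two independent worker pools."""

    def __init__(self, prefill_workers, decode_workers):
        self._prefill = cycle(prefill_workers)
        self._decode = cycle(decode_workers)

    def route(self, request: Request) -> dict:
        # Phase 1: the prompt is processed by the prompt-processing pool.
        prefill_worker = next(self._prefill)
        prefill_worker.handled.append(request.request_id)

        # Placeholder for the intermediate state passed between the pools.
        kv_handle = f"kv:{request.request_id}"

        # Phase 2: token generation continues in the generation pool.
        decode_worker = next(self._decode)
        decode_worker.handled.append(request.request_id)

        return {
            "request_id": request.request_id,
            "prefill": prefill_worker.name,
            "decode": decode_worker.name,
            "kv_handle": kv_handle,
        }


if __name__ == "__main__":
    router = DisaggregatedRouter(
        prefill_workers=[Worker("prefill-0")],
        decode_workers=[Worker("decode-0"), Worker("decode-1")],
    )
    for i in range(3):
        print(router.route(Request(f"req-{i}", prompt_tokens=512, max_new_tokens=128)))
```

Because the two pools are separate lists, the number of workers in each can be changed without touching the other. A production router would likely also consider queue depth, prompt length, and cache locality rather than plain round-robin.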

What Does This Mean?

- Cost optimization: Companies can significantly reduce their GPU costs for AI inference without compromising performance. In the retailer example, the savings amount to several hundred thousand dollars annually.

- Improved user experience: The architecture leads to more consistent token rates and stable response quality, which is directly reflected in application performance.

- Scalability: The separation makes it possible to scale each pool independently and align it optimally with its specific task — an important advantage for growing AI workloads.

- Infrastructure planning: For the years 2025 to 2030, GPU capacity planning is becoming increasingly relevant strategically. Disaggregated Inference offers a way to make better use of existing resources before purchasing additional hardware.

- Broader applicability: The approach is especially relevant for companies with high inference volumes, but could also help smaller organizations that are deliberately focused on efficiency gains.

Sources

- Double GPU Efficiency – at No Extra Cost (Computerwoche)

- NVIDIA Focuses on Efficiency Rather Than Pure Computing Power with Blackwell (Ad-Hoc-News)

- AI Infrastructure Capacity Planning and GPU Forecasting 2025–2030 (Introl)

- GPUs for AI Workloads (Google Cloud)

This article was created with AI assistance and is based on the cited sources and the language model’s training data.
