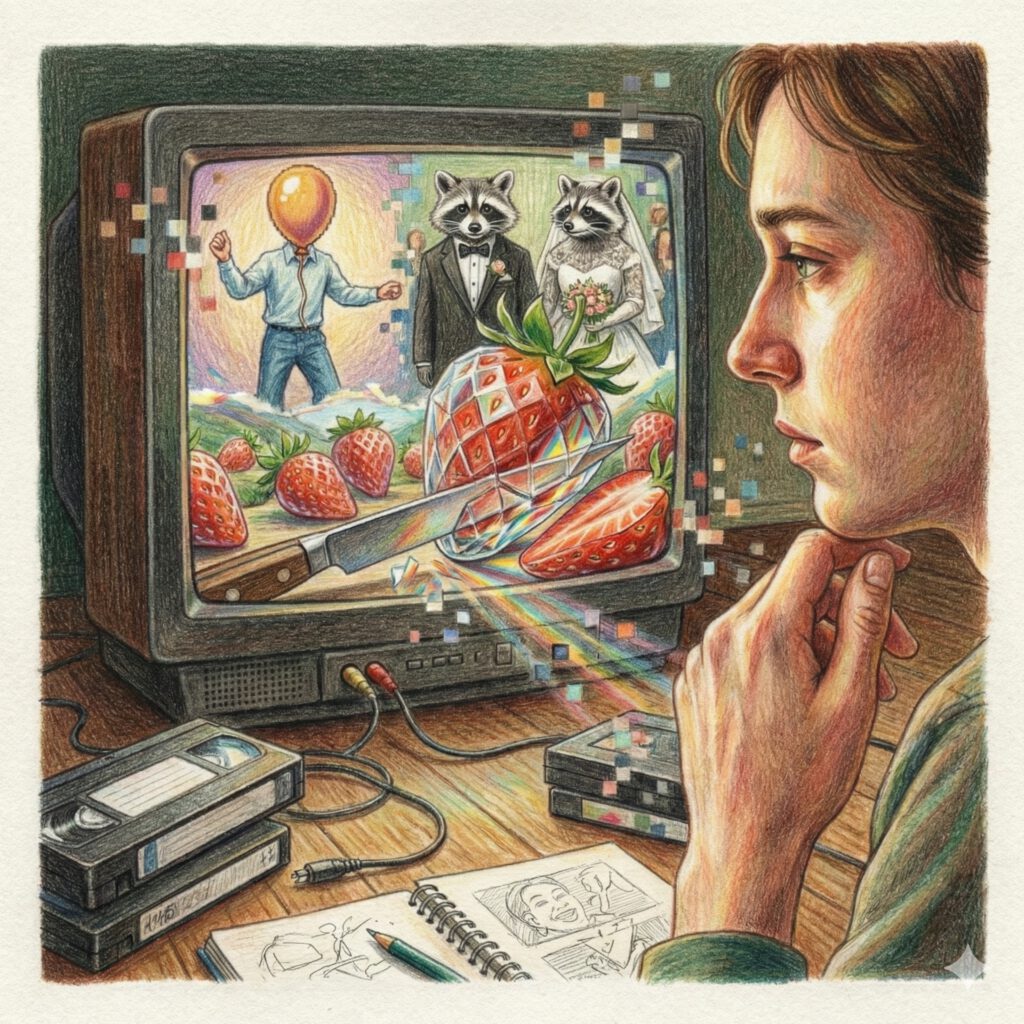

There are those strange moments on the internet when you pause, tilt your head, and wonder: “Wait — is this video real?”

Between 2016 and today, January 2026, such moments have become a daily routine. AI videos carry names like “CCTV clip,” “doorbell camera,” or “glass fruit ASMR.” They show jumping rabbits, cats with banjos, glass strawberries, or people with balloon heads. What has happened in just a decade cannot simply be described as technological progress. It is closer to a visual decoupling: the image has detached itself from light, the camera has become optional, and our relationship to reality has been fundamentally renegotiated.

Welcome to a journey through ten years in which the act of seeing itself was up for debate.

The Backstory: The Era of Talking Heads (2016–2021)

To understand where we stand today, you have to look back to a time when AI video meant something fundamentally different. Between 2016 and 2018, research labs were experimenting with so-called lip-sync models. The idea was simple: take existing film footage and re-render the mouth movements so they match a different language. The goal was synchronization, not creativity.

The term “deepfake” first appeared on shady Reddit forums, accompanied by a diffuse mix of unease and technical fascination. Yet visually, everything remained surprisingly limited: no new worlds, no movement through space — just faces that almost worked.

When Synthesia launched publicly in 2019, it was a milestone for businesses. Suddenly avatars could deliver presentations, explain compliance rules, or conduct product training. In dozens of languages. Efficient, scalable, cost-effective. But also: sterile. These digital speakers were correct but lifeless. They lacked the tremor, the hesitation, the micro-disorder of real life. They looked like well-groomed news anchors from the uncanny valley.

It was still clear: AI replaces humans in front of the camera. Not the camera itself.

2022: The Year AI Learned to Dream

Then came 2022 — and with it a paradigm shift. In July, CogVideo appeared, a Chinese open-source project. Rough, unwieldy, technically demanding. But revolutionary. For the first time, pure text generated movement over time.

Shortly after, a race among tech giants began. Meta presented Make-A-Video, Google responded with Phenaki and Imagen Video. The results were short, noisy, fragmented — like dreams you can’t quite remember. But they showed something fundamental: AI now understood concepts. Not just pixels, not just frames. But actions. A “teddy bear painting” remained a painting teddy bear across several seconds. The timeline had gained meaning.

This was no longer just a tech demo. It was the promise of a new visual language.

2023: Runway and the Disappearance of the Camera

The real breakthrough didn’t come from a closed lab, but from a browser. In February 2023, Runway introduced Gen-1, which restyled existing footage (video-to-video). But Gen-2, announced in March, was the true turning point: it generated video from text alone, and once access opened up, anyone with an internet connection could do it.

Not perfect. Not by a long shot. Arms grew too numerous, faces melted, backgrounds morphed without regard for physical logic. But it didn’t matter. The internet did what it always does: it started playing. The camera was no longer a physical object — it was a thought you could type.

For the first time, AI video was no longer a privilege. It was a tool. Democratized, chaotic, untamed.

2024: The Illusion of Reality — From Digital Cinema to the End of Proof

Sora and the Silent Shock

When OpenAI introduced Sora in February 2024, something fundamental changed. Not euphorically, not loudly, but quietly.

The AI video — the clip that made the world pause — was called “Tokyo Street Walk.” A woman in a black leather jacket walks through rain-soaked, neon-lit Tokyo. Lights reflect in puddles. The camera follows her, pans slightly, breathes. It was no spectacle of effects. No gag. Just: world. A scene that could have come from a Wong Kar-wai film. The shock ran deep because nothing about it screamed “AI.” No exaggeration, no morphing faces, no physical errors. Just reality that had never existed.

Shortly after, “Air Head” followed from the production company Shy Kids — a poetic short film about a man with a yellow balloon as a head. Consistent, narrative, emotional. For the first time, AI video was not a demo but cinema.

The Age of Fake Surveillance Cameras

While Sora attacked high-gloss cinema, something even more powerful emerged in the niches of social media: a genre that exploited our primal trust in poor image quality.

It started with a seemingly mundane clip: rabbits jumping on a trampoline, filmed from the perspective of a surveillance camera. Mediocre resolution, static angle — exactly the visual vocabulary we’ve trusted blindly for decades. The video went viral because it looked so unspectacular. Only upon close analysis did it become clear: completely AI-generated.

Shortly after, the genre exploded: cats playing banjo at doorbells. Raccoons holding weddings on terraces. “CCTV Captures Cats Saving Kids From Bear Attacks.”

What emerged here was more than a trend. It was a mental tipping point. AI had learned that grainy, shaky footage doesn’t look suspicious — it looks authentic. Poor image quality was no longer a flaw but a stylistic device. The surveillance camera, once a symbol of documentary truth, became a theater backdrop.

2025: The Year of Surreal Everyday Life and Glass Fruit

The Uncanny Becomes a Meme

When reality can be perfectly imitated, creativity often flees into the grotesque. The “You Are What You Eat” trend showed anthropomorphic burgers and pizza people consuming themselves. These videos were intentionally wrong, deliberately uncanny — and successful precisely for that reason. They used the uncanny valley not as a bug, but as a feature.

In parallel, historical iconography became a playground. We saw the Mona Lisa eating pizza, “Leonardo Da Vinci Paints Mona Lisa,” or “Ancient Roman Empire AI video.” The absurd became the new normal.

In 2025, the ironic counter-movement followed: the “2004 Webcam Style.” Intentionally pixelated clips set to pop hits of the 2000s — a digital retro filter that flirted with its own artificiality. In a world of perfect 8K simulation, imperfection became a luxury.

Glass, Fruit, and the New Materiality

Then came the moment that condensed everything. AI videos of glass strawberries, grapefruits, and watermelons that sounded like crystal when cut, but bled like real fruit.

These clips struck something deep. They combined physical impossibility with tactile logic. You could almost taste the shattering. It was ASMR for a world you cannot touch — Dalí’s melting clocks, but in 4K and slow motion. AI video was suddenly not just visual anymore, but sensory.

When Brands Dream — and Stumble

When the industry commercialized the technology, the debate turned emotional. Coca-Cola’s “Holidays Are Coming 2.0” from Christmas 2024 was technically flawless yet sharply criticized. Too polished. Too soulless. Many felt the clip was a betrayal of the nostalgic warmth of the original.

The Toys “R” Us “The Origin” spot showed the other extreme: a soft, hazy fever dream of memory. It made one thing clear: we accept AI more readily when it creates surreal worlds than when it tries to simulate our sacred memories.

The Tools of Change: AI Video Platforms in 2026

Today the market has sorted itself out. There are tools for every kind of dreaming:

Generative core platforms: OpenAI Sora, Kling AI, Luma Dream Machine, Google Veo. They are the directors of the new visual world.

Communicators: HeyGen and Synthesia have effectively demolished language barriers through perfect lip-syncing and video translation.

Automators: InVideo AI and Pictory fuel the “faceless channels” on YouTube. Script in, video out. Efficient, but often as interchangeable as fast food. AI doesn’t replace a film crew here. It replaces the barrier to entry. That’s democratic, and it gives pause at the same time.

| Platform | Model / Basis | Free option | Well suited for trend |
|---|---|---|---|
| ChatGPT | Sora 2 (OpenAI model) | Limited number for Plus users; free users via Microsoft Rewards / VPN | Identity variations & cinematic aesthetics |
| Google Gemini | Veo 3.1 (Google model) | Monthly AI credits (approx. 200 points) in Gemini Advanced | Lip-sync & YouTube integration |
| Kling AI | Kling 2.0 | Free daily credits on login | Physical accuracy (e.g., liquids) |
| Luma Dream Machine | Luma / Sora 2 Preview | Limited monthly generations | Fake Memory Footage (VHS style) |
| HeyGen / Hedra | Proprietary specialized models | 1–5 free credits per month | Talking avatars & selfie dialogues |
| Runway | Gen-3 / Gen-4 | Starting credits for new sign-ups | World-morphing & precise camera control |
| Grok (xAI) | Grok-3 / X-Video | Occasional free access for verified profiles; X Premium usually required | Real-time news visuals & unfiltered satire |

AI Video Trends in 2026

In 2026, the character of AI videos has fundamentally changed. The technical wow effect has faded; what remains is the aesthetic stance. AI videos no longer need to impress — they need to resonate.

A central trend is selfie transformations that play with identity. From a single photo, alternative versions of the same person emerge: as a future self, as a historical figure, or as an inhabitant of a different reality. Particularly popular are dialogues between “present” and “future” selves, made possible by significantly improved facial consistency and precise lip-sync.

In parallel, world-morphing is booming: video-to-video synthesis transforms your own room into a jungle or film set in real time. While high-dopamine ultra-shorts deliver fast entertainment through AI-driven rhythm editing, faceless formats are gaining importance at the same time. Videos from the perspective of everyday objects (object-POV) or surreal material studies work purely on the senses and transcend language barriers.

Another emotional trend is Fake Memory Footage: AI-generated “memories” in VHS or webcam aesthetic play with nostalgia and show that atmospheric credibility often weighs heavier than factual truth. Characteristic of 2026 are long, quiet takes and deliberate reduction. AI video is no longer a mere effect but a precise narrative tool, with human authenticity at its core.

Try It Yourself: Six Prompts That Show What AI Can Do

Prompt 1: Selfie-Based Identity Variations – “Another Possible Me”

What it’s about: From a neutral selfie, an alternative version of you emerges — not as a gag, but as a believable existence in a different reality.

Prompt: Use the uploaded selfie as a reference. Generate a calm, cinematic video of the same person in an alternative reality. The person stands still, looking slightly past the camera. Setting: muted light, realistic materials, believable architecture. No effects, no exaggeration. Camera movement: very slow push-in. Mood: reflective, calm, matter-of-fact. Duration: approx. 8–12 seconds.

(Selfie optional, but recommended)

What to observe: Whether the face remains stable throughout the sequence or subtly “drifts”; whether the scene feels deliberately calm rather than spectacular; and whether that quiet but compelling feeling arises: “That’s me, but somewhere else.”

Works with: Sora (via ChatGPT), Runway (Gen-3/Gen-4), Veo (via Google Gemini)

Prompt 2: Selfie-Based Identity Variations – Identity Timelines

What it’s about: No dialogue, no text on screen — just two versions of the same person looking at each other. Time is told visually, not explained.

Prompt: Use the uploaded selfie as a reference. A calm, cinematic split-screen video showing two versions of the same person. Left: the present self. Right: the future self, naturally aged, only subtle changes. Both retain identical facial structure and a similar expression style. They don’t speak. They briefly look at each other, then look away again. The light is soft, neutral, and realistic. The camera remains completely still. Mood: reflective, intimate, quiet.

(Selfie optional, but recommended)

What to observe: How subtle the aging looks; whether both versions clearly remain the same person; and whether the silence is more powerful than words.

Works with: Veo (via Google Gemini), Kling AI, HeyGen (simplified version)

Prompt 3: Object-POV — The World from an Object’s Perspective

What it’s about: The camera is no longer an observer — it’s an object quietly participating in everyday life.

Prompt: Generate a video from the perspective of a coffee cup on a kitchen table. Morning light falls through a window, dust particles visible. People move blurred in the background, remaining anonymous. The camera moves minimally with the object’s inertia. No cuts, no music — just visual stillness. Duration: approx. 10 seconds.

What to observe: Whether the camera movement feels physically plausible; whether the scene remains interesting despite lacking action; and whether a quiet intimacy emerges.

Works with: Luma, Runway

Prompt 4: Material Study – “Impossible Material, Real Logic”

What it’s about: An object behaves in a materially contradictory way, but remains visually consistent.

Prompt: Show a strawberry made entirely of clear glass. A blade slowly cuts through it. The glass splinters audibly, while red juice simultaneously flows out. Macro shot, calm light, neutral setting. No camera cuts — focus on texture and movement. Duration: approx. 6–8 seconds.

What to observe: Whether the breaking, sound, and movement fit together coherently; whether the impossibility still feels logical within the scene; and whether the video thereby produces an almost tactile, “touchable” effect.

Works with: Luma, Runway, Veo (via Gemini, if available)

Prompt 5: Memory Footage – “A Memory That Never Existed”

What it’s about: The AI generates a familiar memory that feels emotionally credible, independent of truth.

Prompt: Generate a video in the style of an old VHS home recording. Scene: summer afternoon, children running through a garden, blurry and casual. Colors slightly washed out, visible image noise, mild overexposure. The camera feels clumsily operated. No clear faces, no focus on individuals. Duration: approx. 10–15 seconds.

What to observe: Whether the video evokes nostalgia even though you know it’s fabricated; whether the scene feels more “remembered” than staged; and whether the blurriness enhances its credibility.

Works with: Luma

Prompt 6: Quiet Narrative – “The Silence”

What it’s about: This trend is about omission. A single shot that tells a story through light and shadow, without much happening.

Prompt: Uninterrupted shot of an empty wooden chair in a dark room. A single beam of light slowly moves across the wood grain. Dust particles dance in the light. At the end of the video, a soft shadow of a person falls on the floor — someone you never see. Melancholic, aesthetic, still. Duration: approx. 10 seconds.

What to observe: The interplay of light and shadow and the subtlety of the dust particles. The emotional effect is created purely through composition.

Works with: Google Gemini (Veo 3.1) or Sora 2
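If you want to write variations of your own, it helps to notice that the six prompts share a recurring shape: a scene description, a few things to leave out, a camera movement, a mood, and a duration. As a rough sketch only, that shape could be captured in a small Python helper. The class name `VideoPrompt`, its fields, and `render()` are hypothetical and belong to no platform's API; the output is plain text that you paste into whichever tool you are testing.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class VideoPrompt:
    """Hypothetical container for the recurring parts of a video prompt."""

    scene: str                                   # what is shown
    camera: str = ""                             # camera movement, if any
    mood: str = ""                               # overall tone
    exclusions: List[str] = field(default_factory=list)   # things to leave out
    duration_seconds: Optional[Tuple[int, int]] = None    # (min, max)

    def render(self) -> str:
        """Join the filled-in parts into one plain-text prompt."""
        parts = [self.scene.strip()]
        if self.exclusions:
            parts.append(" ".join(f"No {item}." for item in self.exclusions))
        if self.camera:
            parts.append(f"Camera movement: {self.camera}.")
        if self.mood:
            parts.append(f"Mood: {self.mood}.")
        if self.duration_seconds:
            low, high = self.duration_seconds
            span = str(low) if low == high else f"{low}-{high}"
            parts.append(f"Duration: approx. {span} seconds.")
        return " ".join(parts)


# Example: a variation on the glass strawberry prompt.
strawberry = VideoPrompt(
    scene="Show a strawberry made entirely of clear glass. A blade slowly cuts through it.",
    camera="static macro shot",
    mood="calm, neutral",
    exclusions=["camera cuts"],
    duration_seconds=(6, 8),
)
print(strawberry.render())
```

Keeping the parts separate makes it easy to change one variable at a time, for example the mood or the duration, and compare how each platform responds.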

Conclusion: The End of Innocence

AI videos have not simply lied to us over these ten years. They have shown us how unconditionally we used to believe in images. The camera is not dead, but it is no longer innocent. The image is no longer proof — it is a claim.

Perhaps this is the most important insight of January 2026: it was not the machines that learned to make images; it was we who learned to read them anew. We no longer just look; we examine, we feel, we decide afresh what moves us. The question is no longer “Is this real?” but “Does it touch me?”

And now if you’ll excuse me. I need to watch once more how those raccoons celebrate their wedding on the terrace. It’s generated. But damn, they look happy.

This article was created with the assistance of Claude.ai Sonnet 4.5. The embedded AI videos are linked via YouTube and were all generated using various specialized AI video generators.

Have you tested the prompts? What was your result — let me know in the comments.